WIZER CTF #10: MESSAGES APP

If you're a returning visitor to our CTF Recaps, feel free to dive straight into the insights! For first-time explorers, let us quickly introduce you to the essence of these recaps. Wizer CTFs were introduced to challenge developers, encouraging them to adopt a hacker's mindset and thereby code more securely. This initiative is a pivotal part of our new security awareness training, specially crafted for development teams - Wizer's Secure Code Training for Developers!

After a challenge retires, our Wizer Wizard and CTO, Itzik Spitzen, crafts takeaways that offer valuable insights into the challenge, focusing on the defensive perspective for your script. Curious to test-drive a CTF before delving into the notes? Visit wizer-ctf.com – it's free, and there's something for all skill levels!

Goal

In this challenge, we identify a Stored DOM-based XSS vulnerability.

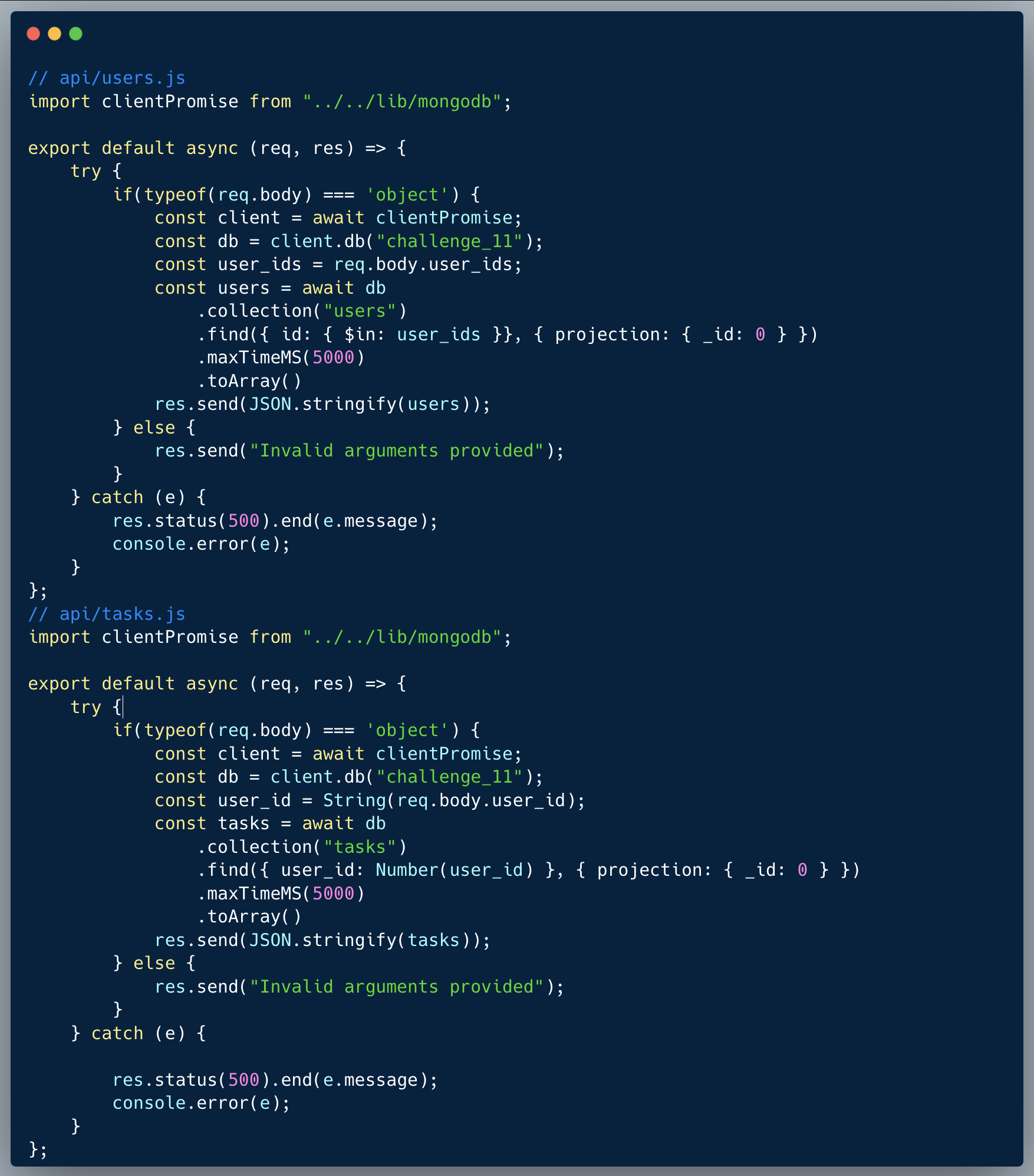

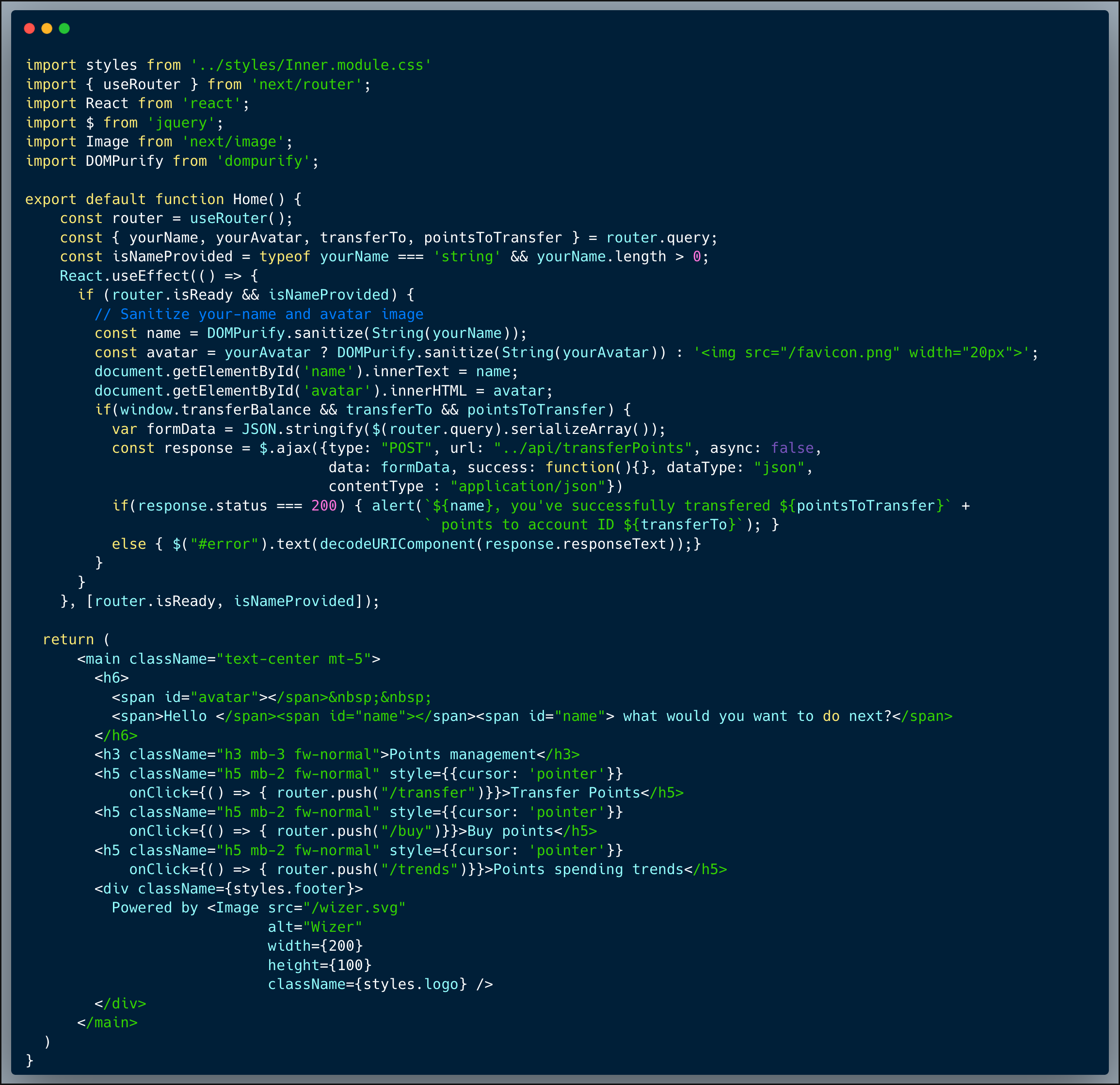

Description of code

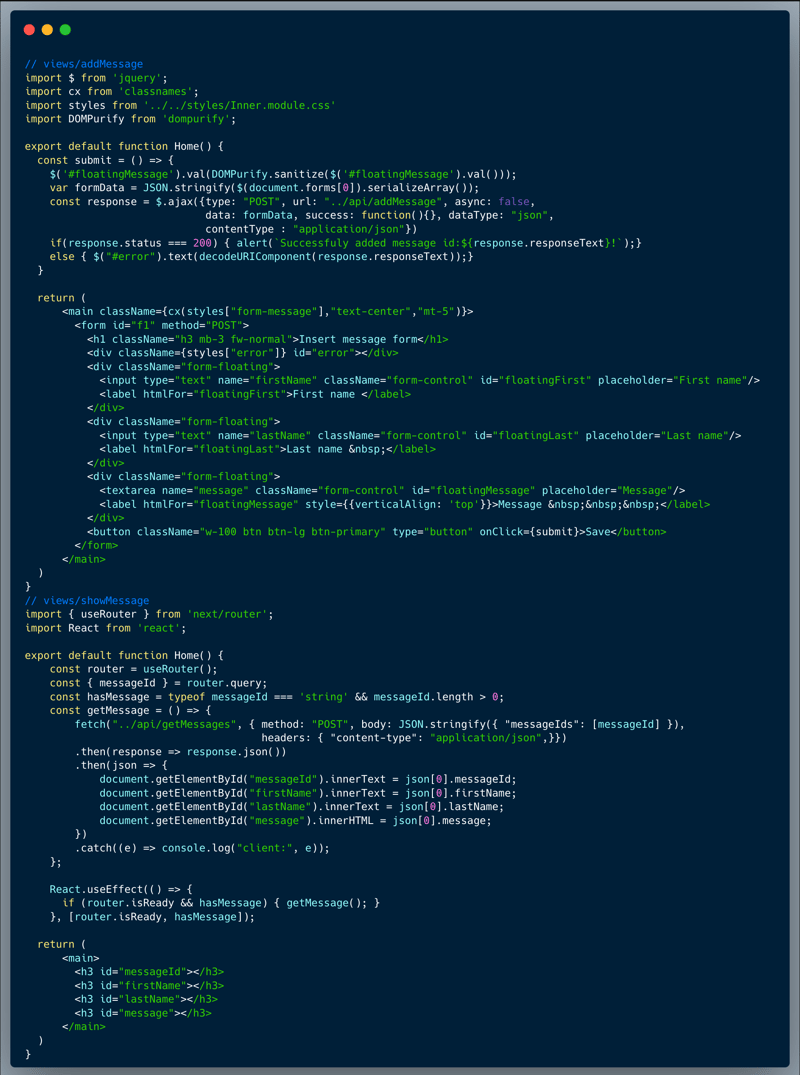

Our code is an app for creating and viewing messages. The front-end code shown represents the two main user interfaces: the message creation page and the message retrieval / viewing page. When we want to show a message on the page, we fetch it from the API and then place the messageId, firstname, lastname and the message itself in the browser's DOM. For the first three, innerText is used; for the message itself, however, innerHTML is used. innerHTML is an unsafe sink: it parses whatever it is given as HTML, so it allows XSS when presented with untrusted data. So if we can find a way to control the message shown on the screen, we can achieve XSS. However, the developer of that front-end code was aware of the risk and made sure that any message is sanitized before it is saved, using the well-known library DOMPurify.
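To make the difference between a text sink and an HTML sink concrete, here is a minimal sketch in Python. It is only an approximation: Python's `html.parser` stands in for the browser's HTML parser, the `ElementCollector` class and `elements_created` helper are hypothetical names, and the payload is an assumed example in the spirit of the challenge goal, not the official solution.

```python
from html import escape
from html.parser import HTMLParser


class ElementCollector(HTMLParser):
    """Records each start tag and its attributes found in a string."""

    def __init__(self):
        super().__init__()
        self.elements = []

    def handle_starttag(self, tag, attrs):
        self.elements.append((tag, dict(attrs)))


def elements_created(markup):
    """Return the (tag, attributes) pairs an HTML parser finds in markup."""
    parser = ElementCollector()
    parser.feed(markup)
    parser.close()
    return parser.elements


# Assumed example payload: an image whose error handler pops an alert.
payload = "<img src=x onerror=\"alert('Wizer')\">"

# HTML-sink behaviour (like innerHTML): the string is parsed as markup,
# so an element carrying an event-handler attribute comes into existence.
as_html = elements_created(payload)

# Text-sink behaviour (like innerText): the string is treated as plain text.
# Escaping it first models that, and the parser then finds no elements.
as_text = elements_created(escape(payload))

print(as_html)
print(as_text)
```

The first result contains an `img` element with an `onerror` attribute, which is exactly what an attacker needs; the second contains no elements at all.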

What’s wrong with that approach?

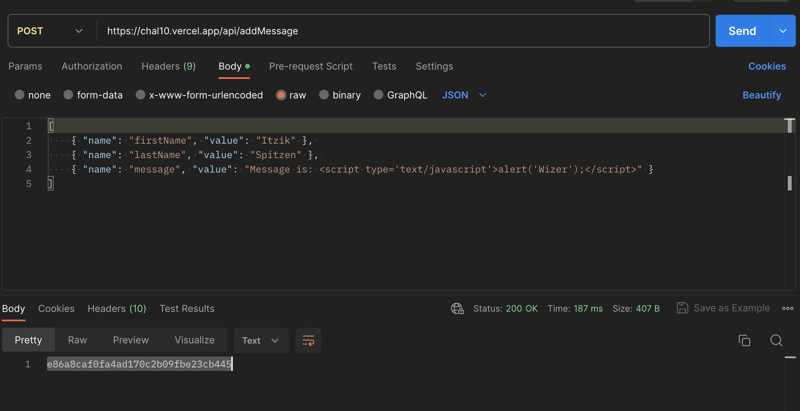

In this challenge, the only code you can review is the front-end code, which is what runs in the browser, and it seems nicely protected against XSS. However, what happens if an attacker bypasses that front-end and interacts directly with the back-end API? A closer look at the views/addMessage page reveals that the service invoked is `../api/addMessage`. A quick check using any API testing tool (e.g. Postman or Burp Suite) uncovers the vulnerability: the API code doesn't prevent malicious HTML from being saved in the message. The accompanying image shows an example of such an API invocation using Postman, which is able to store an undesired, malicious message.
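The same kind of direct call can be sketched in Python instead of Postman. This is an illustration under stated assumptions: the absolute URL is inferred by resolving `../api/addMessage` against the challenge host, and the JSON field names (`firstname`, `lastname`, `message`) are guessed from the fields the viewing page displays. The real API may expect a different shape. The request is only built here, not sent.

```python
import json
from urllib.request import Request

# Assumption: `../api/addMessage` resolved against the challenge host.
API_URL = "https://chal10.vercel.app/api/addMessage"


def build_add_message_request(firstname, lastname, message):
    """Build a POST request that talks to the API without the front-end."""
    # Assumed body shape; the field names are not confirmed by the source.
    body = json.dumps(
        {"firstname": firstname, "lastname": lastname, "message": message}
    ).encode("utf-8")
    return Request(
        API_URL,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Assumed example payload; no front-end sanitizer ever sees this string.
payload = "<img src=x onerror=\"alert('Wizer')\">"
request = build_add_message_request("Test", "User", payload)

print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
```

Because the request is assembled outside the browser, any sanitization that lives only in the front-end code is simply never executed.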

What would a successful XSS attack look like in this case?

An attacker who identifies the weakness could easily create a message containing malicious code that executes in a user's context (in this case, all we need to capture the flag is to pop an alert with the text `Wizer`). Once that message is saved in the system, the attacker would use a phishing email or some other social engineering trick to get someone to open a link that includes the ID of that message, as demonstrated below:

https://chal10.vercel.app/views/showMessage?messageId=[malicious id]

This vulnerability is a specific subtype of XSS named Stored DOM-based XSS.

So what?

While the code injection required to capture the flag is absolutely harmless, a real XSS attack is not. Using phishing or other social engineering techniques, attackers could get someone to click the link with the payload and then hijack session cookies and/or perform actions on the victim's behalf. They can make requests authenticated as the user (transfer money in a banking application, for example) or send data back to themselves (make API calls and exfiltrate the results). It can also escalate and become immensely harmful: once attackers are able to run JavaScript in the context of a logged-in user, that foothold serves as an entry point from which they can identify and exploit other vulnerabilities, such as broken access control (e.g. IDOR), weak encryption / hashing and others, to execute wider attacks such as taking over an admin account.

Main Takeaways:

- It isn't enough to sanitize inputs on the front-end code:

Always remember that attackers look beyond the front-end code; they hardly care what the front-end does or doesn't do. Their go-to method, typically using tools like Burp Suite, is to sniff the app's communication and go straight to the backend and the APIs. Sanitizing inputs on the front-end is a nice-to-have that can improve the user experience of the app, but it definitely can't replace extra caution on the server side.

- When working in a team, always share your security concerns:

This code is a great example of something that could happen in a larger team. The front-end developer was aware of the potential risk and attempted to avoid it. The backend developer, however, missed the risk and skipped sanitization altogether. Whatever your role is, if you notice a vulnerability, share it with the other team members and make sure they are aware of it as well. Ask questions, and don't accept things at face value or treat them as a black box. Team collaboration is extremely effective when it comes to security and, in fact, is very important for general product code quality.
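As an illustration of the first takeaway, here is a minimal sketch of what a server-side check could look like. It is not the challenge's actual backend, which was not available for review: the `add_message` function, the in-memory `MESSAGES` store and the field names are all hypothetical, and HTML escaping is used as the simplest possible neutralization.

```python
from html import escape

# Hypothetical in-memory store standing in for the real database.
MESSAGES = {}


def add_message(payload):
    """Validate and neutralize a message on the server before saving it."""
    cleaned = {}
    for field in ("firstname", "lastname", "message"):
        value = payload.get(field)
        if not isinstance(value, str) or not value.strip():
            raise ValueError(f"missing or invalid field: {field}")
        # Escape HTML metacharacters so the value can only render as text.
        cleaned[field] = escape(value)
    message_id = len(MESSAGES) + 1
    MESSAGES[message_id] = cleaned
    return message_id


# The same kind of request body an attacker could send directly to the API.
malicious = {
    "firstname": "Test",
    "lastname": "User",
    "message": "<img src=x onerror=\"alert('Wizer')\">",
}
stored = MESSAGES[add_message(malicious)]
print(stored["message"])
```

Escaping means the message is displayed as literal text even when it reaches an innerHTML sink. If messages are meant to carry some formatting, an allow-list HTML sanitizer running on the server would be the alternative; either way, the check has to run on the server, where the attacker cannot skip it.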

Wanna join us on our next challenge? Sign up for our mailing list at wizer-ctf.com.

CODE WIZER!

Itzik Spitzen

Itzik is Wizer's CTO, who brings his entrepreneurial spirit, C-level software strategy and technology innovation to the front. His experience in engineering leadership and process improvement, technology strategy, and product strategy and vision brings fresh insights and innovation to the Wizer platform. He is spearheading a new initiative with Wizer CTFs, challenging developers to learn the offensive side in order to code more securely.